An EMCCD camera, designed from the ground up for extreme faint flux imaging, is presented. CCCP, the CCD Controller for Counting Photons, has been integrated with a CCD97 EMCCD from e2v technologies into a scientific camera at the Laboratoire d’Astrophysique Expérimentale (LAE), Université de Montréal. This new camera achieves subelectron readout noise and very low clock-induced charge (CIC) levels, which are mandatory for extreme faint flux imaging. It has been characterized in the laboratory and used on the Observatoire du Mont Mégantic 1.6 m telescope. The performance of the camera is discussed, and experimental data are presented together with the first scientific data.

The advent of electron-multiplying charge-coupled devices (EMCCD) allows subelectron readout noise to be achieved. However, the multiplication process involved in rendering this low noise level is stochastic. The statistical behavior of the gain that is generated by the electron multiplying register adds an excess noise factor (ENF) that reaches a value of √2 at high gains (Stanford & Hadwen 2002). The effect on the signal-to-noise ratio (SNR) of the system is the same as if the quantum efficiency (QE) of the EMCCD were halved. In this regime, the EMCCD is said to be in analog mode (AM) operation.

Some authors proposed offline data processing to lower the impact of the ENF (Lantz et al. 2008; Basden et al. 2003) in AM operation. However, one can overcome the ENF completely, without making any assumption on the signal’s stability across multiple images, simply by treating each pixel as binary and applying a single threshold to its value. The pixel is considered to have detected a single photon if its value is higher than the threshold and none if it is lower. In this way, the SNR is not affected by the ENF and the full QE of the EMCCD can be recovered. In this regime, where the EMCCD is said to be in photon-counting (PC) operation, the highest observable flux rate is dictated by the rate at which the images are read out; a frame rate that is too low will induce losses by coincidence.

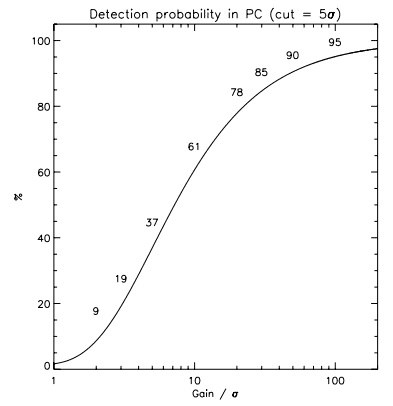

However, at a high frame rate, the clock-induced charges (CIC) become dominant over the other sources of noise affecting the EMCCD (mainly dark noise). CIC levels in the range of 0.01–0.1 were typically measured (Tulloch 2008; Wen et al. 2006; e2v Technologies 2004) on a 512 × 512 CCD97 frame transfer EMCCD from e2v Technologies. Even at a low readout speed of 1 frame s⁻¹, these CIC levels are at least an order of magnitude higher than the dark noise. Thus, anyone wanting to do faint flux imaging with an EMCCD is stuck with two conflicting requirements: a low frame rate is needed to lower the impact of the CIC, while a high frame rate is needed if a reasonable dynamic range is to be achieved.

In order to make faint flux imaging efficient with an EMCCD, the CIC must be reduced to a minimum. Some techniques have been proposed to reduce the CIC (Tulloch 2006; Daigle et al. 2004; Mackay et al. 2004; Gach et al. 2004; e2v Technologies 2004; Janesick 2001) but, until now, no commercially available CCD controller or camera has been able to implement all of them with satisfying results. CCCP, the CCD Controller for Counting Photons, has been designed with the aim of reducing the CIC generated when an EMCCD is read out. It is optimized for driving EMCCDs at high speed (≥10 MHz), but may also be used for driving conventional CCDs (or the conventional output of an EMCCD) at high, moderate, or low speed. This new controller provides an arbitrary clock generator, yielding a timing resolution of ∼20 ps and a voltage resolution of ∼2 mV on the overlap of the clocks used to drive the EMCCD. The frequency components of the clocks can be precisely controlled, and the interclock capacitance effect of the CCD can be nulled to avoid overshoots and undershoots. Using this controller, CIC levels as low as 0.001–0.002 event pixel⁻¹ per frame were measured on the 512 × 512 CCD97 operating in inverted mode. A CCD97 driven by CCCP was placed at the focus of the FaNTOmM instrument (Gach et al. 2002; Hernandez et al. 2003) to replace its GaAs photocathode-based Image Photon Counting System (IPCS). In this article, the important aspects of PC and AM operations with an EMCCD under low fluxes are outlined in § 2. In § 3, CCCP performance regarding these aspects is presented. Finally, in § 4, scientific results obtained at the telescope are presented.

2.1. The Cost of Subelectron Readout Noise

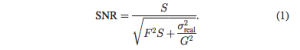

The multiplication process that allows an EMCCD to reach subelectron readout noise is unfortunately stochastic. Only the mean gain is known and it is not possible to know the exact gain that was applied to a pixel’s charge. This uncertainty on the gain and thus on the determination of the quantity of electrons that were accumulated in the pixel causes errors on the photometric measurements. This uncertainty can be translated in a SNR equation as an ENF, F, as follows

Here, S is the quantity of electrons that were acquired and G the mean gain achieved by the multiplication register. At high gain, F² reaches a value of 2 and its effect on the SNR is the same as if the QE of the EMCCD were halved (Stanford & Hadwen 2002).
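For reference, the SNR relation commonly used for an EMCCD can be written with the symbols defined above. This is a sketch in standard notation, not necessarily the authors' exact expression; it assumes that σ denotes the real readout noise of the output amplifier, a symbol that the surrounding text does not define at this point:

$$
\mathrm{SNR} = \frac{S}{\sqrt{F^{2} S + \dfrac{\sigma^{2}}{G^{2}}}}
$$

At high gain, F² tends to 2 and σ/G becomes negligible, so the SNR tends to √(S/2), which is what a noiseless detector with half the QE would deliver.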

The effect of the ENF on the output probability of the EM stage of the EMCCD is outlined by Figure 1, left panel. The overlapping output probabilities for different numbers of input electrons are the result of the ENF. Its impact on the SNR is shown in the right panel. The plateau at 0.707 of relative SNR is the effect of an ENF of value √2. This figure also compares the relative SNR of a conventional CCD (G = 1) operated at 2 and 10 electrons of readout noise.

Thus, for fluxes lower than ∼5 photons pixel⁻¹ per image, the EMCCD in AM operation outperforms the low-noise (σ = 2 electrons) conventional CCD. This does not take into account the duty cycle loss induced by the low speed readout of the low-noise CCD. Since most of the EMCCDs currently available are of frame transfer type, virtually no integration time is lost due to the readout process and this advantage would be even greater for the EMCCD compared to the conventional CCD. In AM operation, the maximum observable flux per image is a function of the EM gain of the EMCCD and the integration time should be chosen to avoid saturation.
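The comparison above can be explored numerically. The following is a minimal sketch, assuming the standard SNR model S/√(F²S + (σ/G)²) for the EMCCD in AM and S/√(S + σ²) for a conventional CCD, with dark and CIC contributions ignored. The function names, the EMCCD readout noise of 50 electrons, and the gain of 1500 are illustrative assumptions, not values taken from Figure 1.

```python
import math

def snr_emccd_am(signal, read_noise, gain, enf_sq=2.0):
    """SNR of an EMCCD in analog mode (assumed model, no dark/CIC term)."""
    return signal / math.sqrt(enf_sq * signal + (read_noise / gain) ** 2)

def snr_conventional(signal, read_noise):
    """SNR of a conventional CCD (G = 1, no excess noise)."""
    return signal / math.sqrt(signal + read_noise ** 2)

def relative_snr(snr, signal):
    """SNR relative to a perfect, shot-noise-limited detector."""
    return snr / math.sqrt(signal)

def crossover_flux(ccd_noise, em_noise, gain, lo=1e-3, hi=1e3):
    """Flux (electrons per pixel per image) at which both SNRs are equal.

    Bisection on a logarithmic scale; below the crossover the EMCCD wins.
    """
    for _ in range(200):
        mid = math.sqrt(lo * hi)
        if snr_emccd_am(mid, em_noise, gain) > snr_conventional(mid, ccd_noise):
            lo = mid
        else:
            hi = mid
    return math.sqrt(lo * hi)

# Illustrative values only (assumptions): 50 e- real readout noise, EM gain 1500.
plateau = relative_snr(snr_emccd_am(100.0, 50.0, 1500.0), 100.0)
crossover = crossover_flux(ccd_noise=2.0, em_noise=50.0, gain=1500.0)
```

With these assumptions the relative SNR of the EMCCD settles on the 1/√2 plateau, and the crossover with a 2-electron conventional CCD falls at a few electrons per pixel, the same order as the ∼5 photons pixel⁻¹ quoted above.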

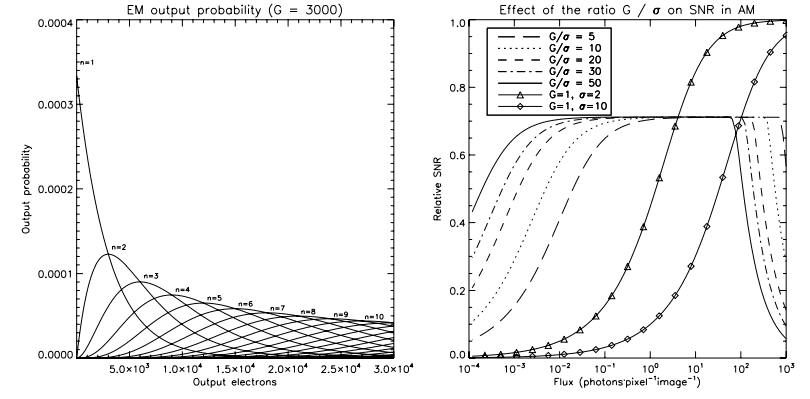

This ENF affects the SNR only when one wants to measure more than 1 photon pixel⁻¹. If one assumes that no more than one photon is to be accumulated, one can consider the pixel as being empty if the output value is lower than a given threshold, or filled by one photon if the output value is higher than the threshold. The threshold is determined solely by the real readout noise of the EMCCD. Typically, a threshold of 5σ allows one to avoid counting false events due to the readout noise (see Fig. 2, left panel). Thus, even if the exact gain is still unknown, it has no impact since it does not enter in the equation of the output value. In this operating mode, the ENF, F, vanishes by taking a value of 1 (Stanford & Hadwen 2002; Daigle et al. 2006a).
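The thresholding step described above can be sketched in a few lines. This is a minimal illustration, assuming that frames are plain lists of rows of pixel values in ADU and that the bias level and the real readout noise are already known in the same units; the function name `photon_count` is hypothetical.

```python
def photon_count(frame, bias, read_noise, n_sigma=5.0):
    """Turn a raw frame into a binary photon-counting frame.

    A pixel is counted as one photon when its value exceeds
    bias + n_sigma * read_noise, and as empty otherwise.
    """
    threshold = bias + n_sigma * read_noise
    return [[1 if pixel > threshold else 0 for pixel in row] for row in frame]

# Example with made-up values: bias of 100 ADU, readout noise of 30 ADU.
raw = [[100, 400],
       [251, 250]]
binary = photon_count(raw, bias=100, read_noise=30)
```

Because only the comparison with the threshold matters, the actual multiplication gain applied to each event never enters the result.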

Counting only 1 photon pixel⁻¹ does have its drawbacks: at high fluxes, coincidence losses become important (Fig. 2, right panel, continuous and dashed line). The only way of overcoming the coincidence losses is to operate the EMCCD at a higher frame rate. In order to lose no more than 10% of the photons by coincidence losses, the frame rate must be at least 5 times higher than the photon flux. This requires that the EMCCD be operated at high speed (typically ≥10 MHz) in order to allow moderate fluxes to be observed.
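The factor of 5 quoted above follows from Poisson statistics. With a mean of λ photons per pixel per frame, a binary pixel registers an event with probability 1 − e^(−λ), so the fraction of photons actually counted is (1 − e^(−λ))/λ. The sketch below evaluates that loss; it assumes an ideal threshold (no events lost in the readout noise), and the function names are illustrative.

```python
import math

def counted_fraction(mean_photons_per_frame):
    """Fraction of incident photons counted by a binary (0 or 1) pixel."""
    lam = mean_photons_per_frame
    return (1.0 - math.exp(-lam)) / lam

def coincidence_loss(flux, frame_rate):
    """Fraction of photons lost to coincidence.

    flux is in photons per pixel per second, frame_rate in frames per second.
    """
    return 1.0 - counted_fraction(flux / frame_rate)

loss_at_5x = coincidence_loss(flux=1.0, frame_rate=5.0)
loss_at_1x = coincidence_loss(flux=1.0, frame_rate=1.0)
```

A frame rate 5 times the photon flux (λ = 0.2) keeps the loss just under 10%, while a frame rate equal to the flux loses more than a third of the photons.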

2.2. The Need for High EM Gain

Figure 2 gives a hint of another aspect of the PC operation: the ratio of the gain over the real readout noise is important. Since PC operation involves applying a threshold that is chosen as a function of the real readout noise, the EM gain that is applied to the pixel’s charge will affect the amount of events that can be counted. Figure 1 shows that for 1 input electron the highest output probability of the EM register is…1 electron. This causes an appreciable proportion of events ending up buried in the readout noise. The proportion of events lost in the readout noise, el, which is the proportion of the events that come out of the EMCCD at a value lower than cut electrons, can be estimated by means of the following convolution

![]()

where f(n, λ) is the Poissonian probability of having n photons during an integration period under a mean flux of λ (in photons per pixel per frame) and p(x, n, G) is the probability of having x output electrons when n electrons are present at the input of the EM stage at a gain of G. This probability is defined by

![]()
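A form widely used in the EMCCD literature for the output distribution of a multiplication register at high gain (for example in Basden et al. 2003) is given below. It is offered as an assumption for orientation, not as the authors' exact expression:

$$
p(x, n, G) = \frac{x^{\,n-1}\, e^{-x/G}}{G^{\,n}\,(n-1)!}
$$

For n = 1 this reduces to the exponential e^{-x/G}/G, whose most probable output is the smallest one, which matches the behavior noted above for a single input electron.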

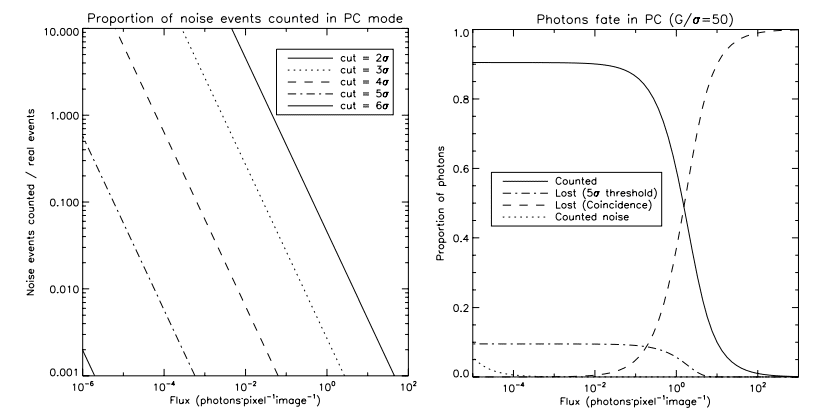

Figure 3 translates the importance of having a high gain over readout noise ratio into a detection probability as a function of the G/σ ratio. An EMCCD that is operated at an EM gain of 1000 and has a readout noise of 50 electrons will allow no more than 78% of the photons to be counted. In order to count 90% of the photons, one would have to use an EM gain of 2500 for the same readout noise. It is the ratio of the gain over the real readout noise that sets the maximum amount of detected photons. Thus, even if an EMCCD is capable of subelectron readout noise, its effect is not completely suppressed. The readout noise still affects the quality of the data if the EM gain is not high enough.
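The two figures quoted above (78% and 90%) can be reproduced with a short calculation. This sketch assumes the low-flux limit in which every event starts from a single electron, and an exponential output distribution e^(−x/G)/G for that electron, so that the fraction of events above a cut of 5σ is exp(−5σ/G). Both the model and the function names are assumptions made for illustration.

```python
import math

def pc_detection_probability(gain, read_noise, n_sigma=5.0):
    """Fraction of single-electron events above n_sigma * read_noise.

    Assumes an exponential EM output distribution with mean `gain`.
    """
    return math.exp(-n_sigma * read_noise / gain)

def gain_for_detection(target_fraction, read_noise, n_sigma=5.0):
    """EM gain needed to detect `target_fraction` of single-electron events."""
    return -n_sigma * read_noise / math.log(target_fraction)

p_gain_1000 = pc_detection_probability(gain=1000.0, read_noise=50.0)
p_gain_2500 = pc_detection_probability(gain=2500.0, read_noise=50.0)
```

Under this approximation a G/σ ratio of 20 gives about 78% and a ratio of 50 gives about 90%, in line with the values read from Figure 3.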

2.3. The Dominance of Clock-Induced Charges

When an EMCCD is operated under low fluxes at high gain and high frame rate, a noise source that is usually buried in the readout noise of a conventional CCD quickly arises: the CIC (e2v Technologies 2004). The CIC are charges that are generated as the photoelectrons are moved across the CCD to be read out. The CIC noise resembles dark noise except that it has no time component. It is only when the CCD is read out that the CIC occur. Thus, the higher the frame rate, the higher the CIC.

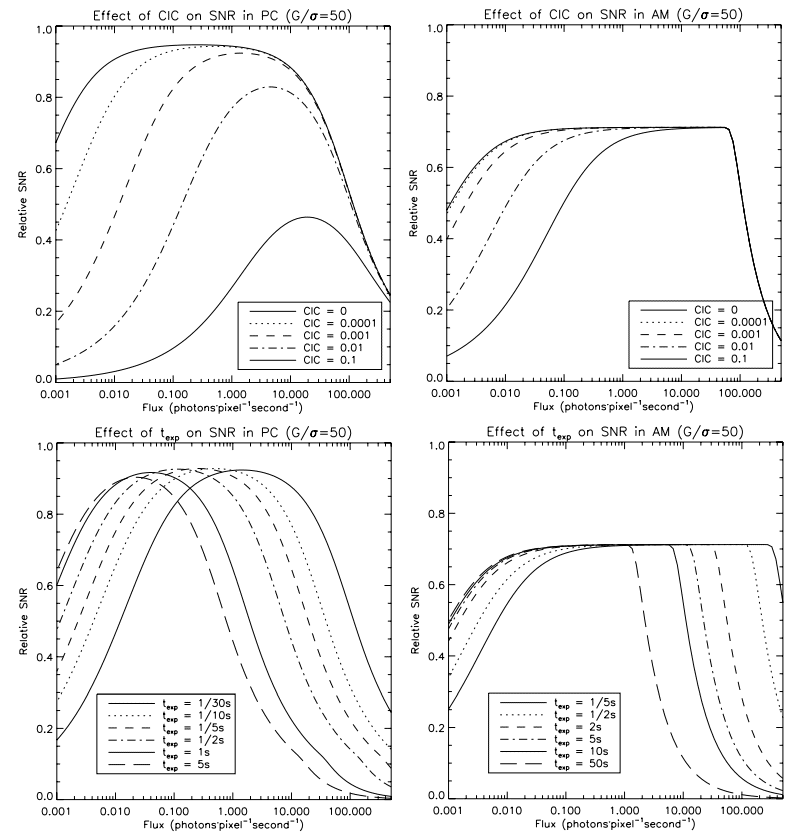

Figure 4 shows the effect of the CIC on the SNR of an EMCCD. One quickly realizes that, at 30 fps, the CIC dominate over the dark noise even for a CIC level as low as 0.0001 electron per pixel per image (top left panel). In AM, at 1 fps, the CIC dominate for levels higher than 0.001 (top right panel). In order to lower the impact of the CIC, one could choose to operate the EMCCD at a lower speed (longer integration time). The effect of this choice is outlined in the bottom panels of the figure (assuming a CIC level of 0.001). It shows that in PC and AM mode, there is little advantage in operating the EMCCD at less than ∼0.5 fps. For a given CIC level, the maximum exposure time should be dictated by the time needed for the dark noise to dominate (about twice as high as the CIC). Moreover, the losses due to saturation (in AM) or by coincidence (in PC) become more important, and increasing the integration time reduces the dynamic range of the images without providing a gain in SNR at low flux. Thus, there is a minimum frame rate at which the EMCCD should be operated, either in AM or PC. For a lower frame rate, there is no further gain in SNR to achieve at low fluxes, and losses occur at high fluxes.

Another problem with the CIC is that it depends on the EM gain (see § 3.2). The higher the EM gain, the higher the rate at which the CIC is generated. Thus, in order to reach the high EM gain needed for faint flux imaging (30–50 times the readout noise), one must really tame the CIC down to low levels.

The camera built using CCCP and a CCD97 (hereafter CCCP/CCD97) was used to gather experimental data and to compare the performance of the CCCP controller against an existing commercial CCD97 camera (namely, the Andor iXonEM+ 897 BI camera, hereafter the Andor camera). The CCCP/CCD97 performance is also presented in absolute numbers. All the data presented, for both cameras, were gathered at a pixel rate of 10 MHz.

3.1. Real and Effective Readout Noise

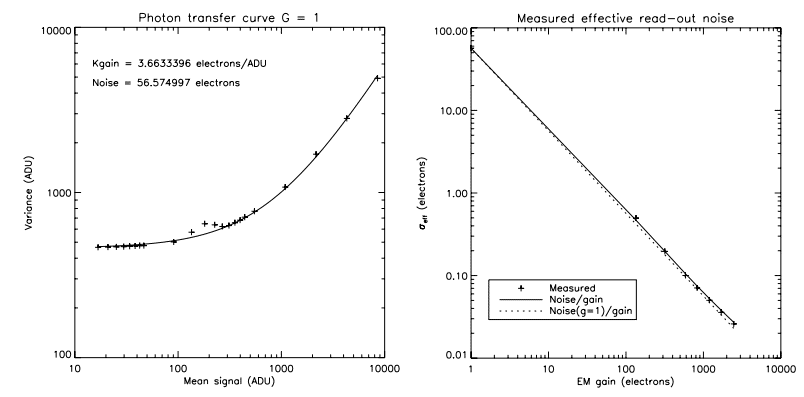

The real readout noise of CCCP/CCD97 was measured at a pixel rate of 10 MHz using the photon transfer curve method with a faint, constant illumination and a varying integration time. For this technique, the variance of the images is plotted against the mean signal (see Janesick 2001 for a detailed explanation of the technique). This technique yields both the readout noise and the reciprocal gain (electrons ADU⁻¹) of the CCD.
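As an illustration of the photon transfer curve method, the sketch below fits a straight line to variance versus mean signal. It assumes the simplest case of a shot-noise-limited detector at unity EM gain, where variance (ADU²) = mean (ADU)/K + (σ/K)², with K the reciprocal gain in electrons ADU⁻¹ and σ the readout noise in electrons. The function names and the synthetic data are hypothetical, and the excess noise of the EM stage is deliberately ignored.

```python
import math

def fit_line(xs, ys):
    """Ordinary least-squares fit y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

def photon_transfer(mean_adu, var_adu):
    """Return (reciprocal gain in e-/ADU, readout noise in e-).

    mean_adu must be bias-subtracted; var_adu is the per-pixel variance.
    """
    slope, intercept = fit_line(mean_adu, var_adu)
    reciprocal_gain = 1.0 / slope
    read_noise = reciprocal_gain * math.sqrt(max(intercept, 0.0))
    return reciprocal_gain, read_noise

# Synthetic, noise-free data for K = 10 e-/ADU and a readout noise of 50 e-.
means = [10.0, 20.0, 40.0, 80.0]
variances = [m / 10.0 + 25.0 for m in means]
k, sigma = photon_transfer(means, variances)
```

The slope gives the reciprocal gain and the intercept at zero signal gives the readout noise, which is why a single curve yields both quantities.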

Figure 5 shows the measurement of both the real and the effective readout noise of CCCP/CCD97. The various plots of the left panel show that the real readout noise does not depend on the EM gain. This is not a given: there is no reason why the readout noise of the EMCCD could not be affected by the EM gain. The clocking of the high-voltage (HV) clock could induce substrate bounce, EMI, or cross-talk and increase the real readout noise. Moreover, the reciprocal gain of the charge domain (after amplification) of the EMCCD could also change if the output amplifier, for example, was not linear. The good concordance of the three data sets of the left panel shows that the real readout noise and the reciprocal gain of the EMCCD are stable. One can thus assume that the effective readout noise of the CCCP/CCD97 can be calculated by means of

$$
\sigma_{\mathrm{eff}} = \frac{\sigma_{G=1}}{G}
$$

where σG=1 is the readout noise calculated at an EM gain of 1 and G is the gain at which the EMCCD is operated.

3.2. Clock-Induced Charges

In order to measure the CIC generated during a readout of the EMCCD, many (∼1000) dark frames were acquired. These frames were exposed for the shortest period of time (to minimize the dark noise) and in total darkness. Then, the histogram of all the frames is fitted with the EM output probability equation (eq. [3]), assuming that n is always 1. For very low event rates (in this case, ≃0.003 event pixel⁻¹ per frame), this assumption has little effect on the CIC measurement. This gives at the same time the EM gain, G, and the mean quantity of events per pixel. The histogram fitting also has the advantage of seeing all the events that are generated in the image area, the storage area, and the conventional horizontal register, even the ones buried in the readout noise. This is mandatory if the CIC levels are to be compared at different EM gains. Obviously, simply counting the events that are above a 5σ threshold would yield lower CIC levels for lower gains as more events would end up in the readout noise (recall Fig. 3). It is beyond the scope of this article to describe the algorithm in detail. The fitting code also calculates the mean bias (CIC + dark free) of all the frames and gives the real readout noise. The bias calculated by the routine can then be used to remove the bias from light frames.

When a zero integration time is used, or when both the image and storage regions of the CCD are flushed prior to the readout (dump of the lines through the Dump Gate), the dark signal generated during the readout can further be removed by fitting a slope of the mean signal from the first line read to the last one. This yields the dark count rate per readout line. This allows the CIC to be completely disentangled from the dark noise.

The Andor camera is advertised as having a CIC + dark level of 0.005 electron pixel⁻¹ per image at an EM gain of 1000 for a 30 ms integration time at -85°C. The CIC + dark noise rate measured on such a camera, using the histogram fitting algorithm, is 0.0084. This discrepancy may come in part from the fact that the measurement method used in this article measures all the events instead of counting only the events that are above 5σ (which is the way Andor characterizes its cameras, as stated in their datasheets). This camera is not capable of higher EM gains and it is thus not possible to measure the CIC at higher gains. Obtaining a higher EM gain is just a matter of producing an HV clock that has a higher amplitude. The Andor camera could probably be used at higher EM gain but it is limited by software to 1000. However, as will be shown in § 3.4, the higher the EM gain, the higher the sensitivity of the EM gain to the amplitude of the HV clock. Thus, greater gain must come with greater stability of the HV clock. Moreover, a higher EM gain means a higher CIC level.

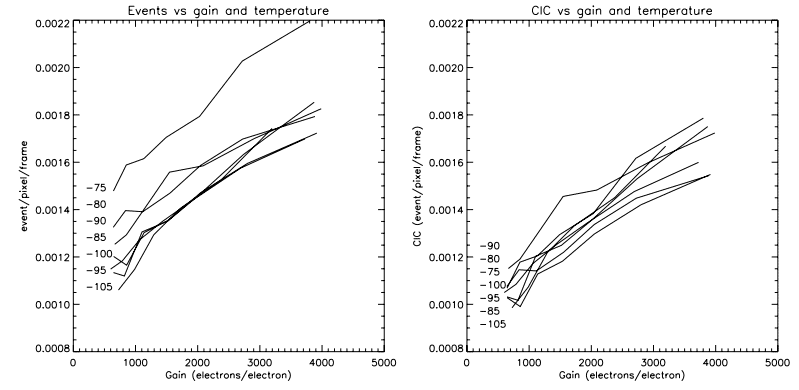

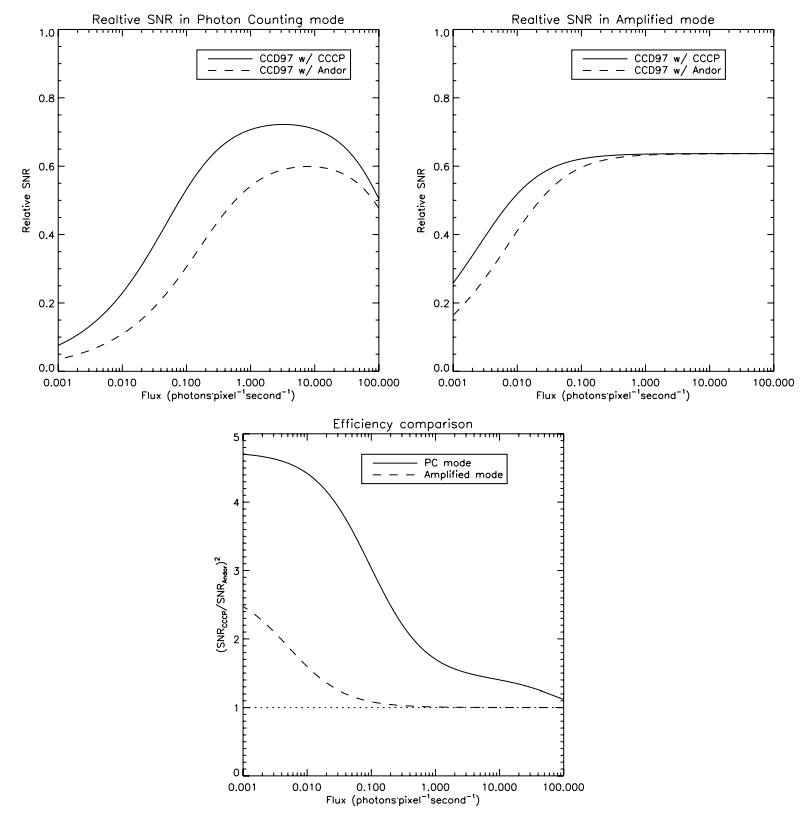

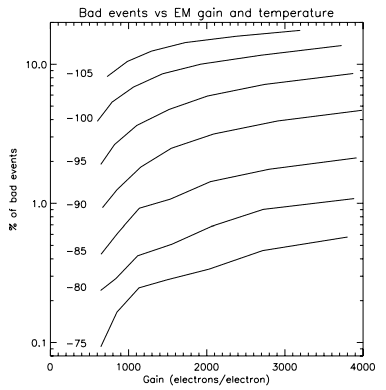

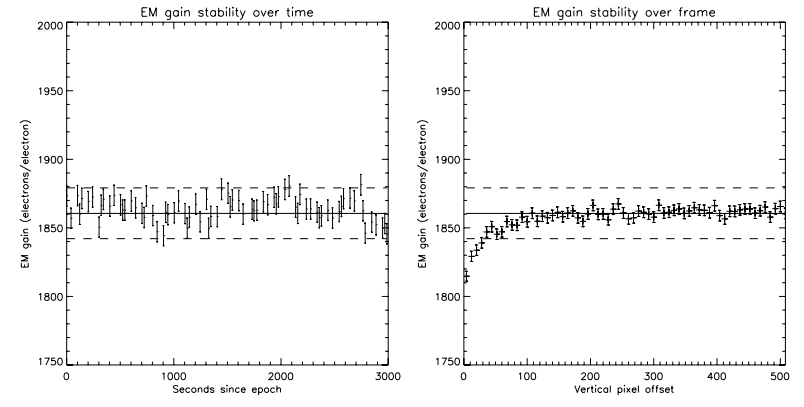

Figure 6 shows the level of CIC that was measured on CCCP/CCD97 at different operating temperatures. At an EM gain of 1000, the amount of events attributable to the CIC is of the order of 0.001 electron pixel⁻¹ per image. At an EM gain of 2500, the CIC level reaches 0.0014–0.0018. This figure also shows that the CIC is not, or is at most weakly, dependent on the operating temperature. Figure 7 compares the SNR of the CCD97 driven by CCCP and the Andor camera, using the EM gain and CIC levels measured.

3.3. Charge Transfer Efficiency

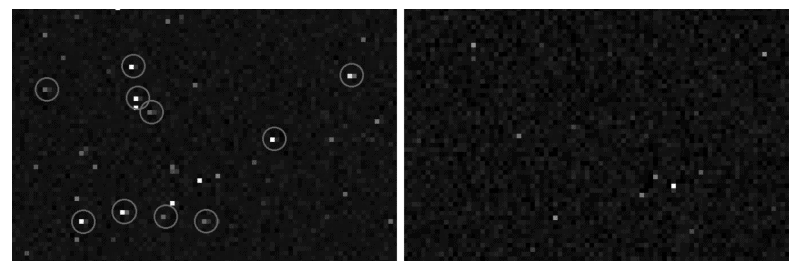

Having a high gain, a low CIC, and a low real readout noise are not the only factors that are needed to make faint flux imaging efficient. The charge transfer efficiency (CTE) of the EM register, that is, the efficiency at which the electrons are moved across the EM register, plays an important role. If this CTE is too low, some of the electrons composing a pixel will not be transferred when they should be and they will lag in the following pixels. In images, these lagging electrons will be seen as a tail lagging behind a bright pixel. Figure 8 shows the effect of an EM CTE that is not optimum (left panel, circled events). These leaking pixels pollute adjacent pixels by raising their value. The adjacent pixels can then be counted as having been struck by a photon when they were not. This creates a source of noise.

For the sake of comparison, one could define a scenario that would allow these bad pixels to be identified and counted. For example, one could use dark frames, expected to contain only dark and CIC events. If, say, the rate of events in these images is 0.01 event pixel⁻¹ per image, there is, assuming a Poissonian process for the generation of the dark and CIC, only a 1% chance that an event would immediately follow another. By counting the quantity of events that are adjacent, it is possible to know the quantity of events that are due to the bad CTE.

In the left panel of Figure 8, the mean CIC + dark rate measured is 0.0084 event pixel⁻¹ per frame. Thus, no more than 0.84% of the events should be adjacent. However, 5.5% of the events immediately follow one another. The excess in adjacent events (4.6%) must come from the bad CTE. In other words, given any event in an image (dark, CIC, or photoelectron), there is a 4.6% chance that it will be followed by a bad event.

In the right panel of Figure 8, the rate of adjacent events is measured to be 0.46%, where the expected rate is 0.18%. Thus, one can tell that at -85°C and at a gain over readout noise ratio of 22, there are only ∼0.3% bad events with the CCCP/CCD97 camera. Thus, even though CCCP’s EM gain is higher than that of the Andor camera, CCCP/CCD97 has a much lower bad event rate, which means that the CTE of the EMCCD can be better handled with CCCP.
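The adjacency test described above can be sketched as follows. The code assumes binary (thresholded) dark frames stored as lists of rows, with pixels within a row listed in readout order, so that pixel i + 1 is the one read immediately after pixel i. The function names are hypothetical; the numbers fed to `excess_adjacent_fraction` are the ones quoted in the text.

```python
def adjacent_event_fraction(binary_frames):
    """Fraction of events immediately followed by another event on the same line."""
    events = 0
    followed = 0
    for frame in binary_frames:
        for row in frame:
            for i, pixel in enumerate(row):
                if pixel:
                    events += 1
                    if i + 1 < len(row) and row[i + 1]:
                        followed += 1
    return followed / events if events else 0.0

def excess_adjacent_fraction(measured_fraction, event_rate):
    """Adjacent events in excess of the chance rate expected for Poissonian events."""
    return measured_fraction - event_rate

# Tiny made-up frame: 4 events, one of which is followed by another event.
demo = adjacent_event_fraction([[[1, 1, 0, 0],
                                 [0, 1, 0, 1]]])

# Values quoted in the text for the two panels of Figure 8.
excess_high = excess_adjacent_fraction(0.055, 0.0084)
excess_low = excess_adjacent_fraction(0.0046, 0.0018)
```

The chance rate is approximated by the mean event rate itself, which is valid only when that rate is much smaller than 1, as is the case for dark frames.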

The CTE deteriorates as the EM gain is increased and as the temperature of the CCD is lowered (Fig. 9). Adjustments to the clocking (clock overlap voltages, clock frequency components, clock high and low levels, etc.) were performed after these measurements were made and better CTE figures are now achieved, as can be seen in Figure 8. However, the behavior of the CTE as a function of the temperature and gain is maintained: the bad event rate rises with the EM gain and with lower temperatures.

3.4. Gain Stability

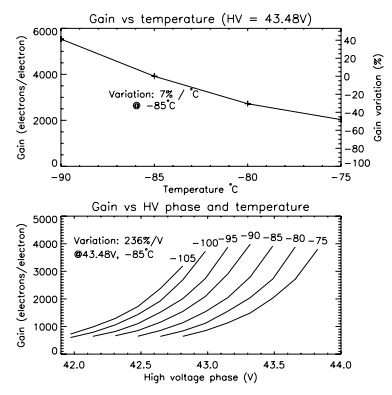

The stability of the gain of an EMCCD is important as it will affect the photometric measurements. The gain of the EM register is very sensitive to the variations of the HV clock. The higher the gain, the higher the sensitivity to the HV clock amplitude (Fig. 10, bottom panel). At a high gain, a variation of 1 V in the amplitude of the HV clock represents more than a twofold variation of the EM gain. This means that in order to achieve a gain stability of the order of a percent, the HV clock must be stable within ∼5 mV. The EM register is also sensitive to the temperature (Fig. 10, top panel). In the EM gain regime shown, the temperature must be controlled to within about 0.1°C in order to achieve a gain stability of ±1%.

3.4.1. Gain Stability over Time

The stability of the EM gain of CCCP/CCD97 has been measured by taking dark images continuously at high gain (G/σ ≃ 30), moderate frame rate (10 fps), and at a temperature of -85°C. In total, 30,000 images were acquired. Then, the EM gain of these images was determined using the usual algorithm. The algorithm used a window of 400 frames out of the 30,000 and slid 400 frames every iteration. Thus, 75 data points were produced, each representing about 40 s. The results are shown in Figure 11, left panel. The error bars in this figure are calculated using

![]()

where G is the EM gain measured and n is the quantity of events that were used to obtain G. The constant 2 is used to account for the ENF of the EMCCD.

The gain variation over time is thought to be due to the variation of the temperature, rather than the variation of the HV clock amplitude. The temperature controller used to gather these data has an accuracy slightly worse than ±0.1°C.

3.4.2. Gain Stability over Frame

The EM gain must also be stable through an image or, at least, its variation must be well characterized. EM gain variation may come from the HV clock amplitude which can take some time to stabilize at the beginning of the frame, given that the HV clock is turned off during the integration time. Even if the HV clock is kept running during the integration time, the capacitive coupling between it and the conventional horizontal clocks can induce a variation of the HV amplitude when they are activated and, consequently, a variation in the gain.

The 30,000 frames used in § 3.4.1 were used once again to measure the gain over the image. The EM gain was determined on a per-line basis rather than on an image basis. Then, lines were binned by 8 to increase the SNR (given eq. [5]). This yields the right panel of Figure 11.

One can see that the HV clock takes about 32 lines to stabilize to within about 1% gain variation. The HV clock was stopped during the exposure time of these frames. This gain variation is stable, so it can be taken into account while processing the raw data to yield accurate photometric measurements.

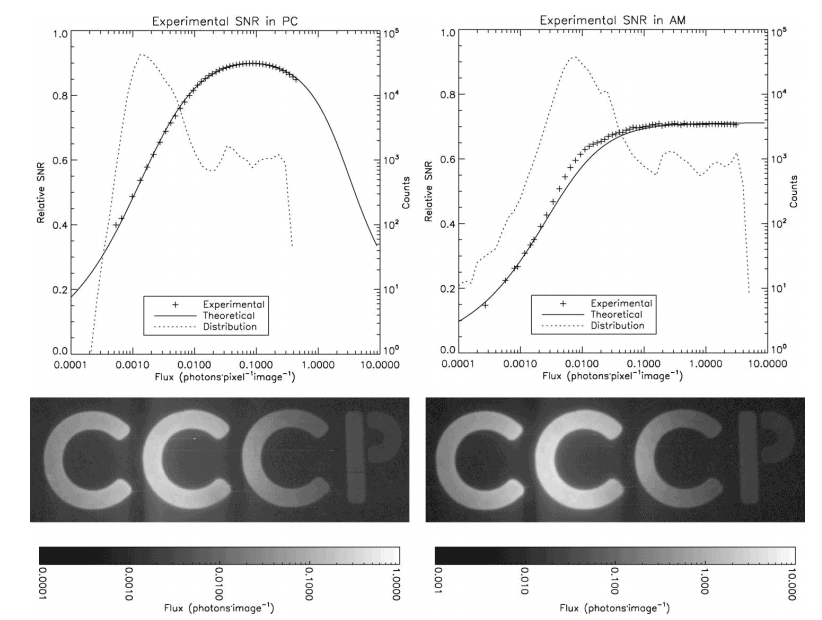

3.5. Experimental SNR

Experimental SNR curves were obtained in the lab. In order to do so, a scene spanning about 3 orders of magnitude in contrast was observed (Figure 12, bottom panels). Two acquisitions were performed, one in PC and one in AM, with exposure times of 0.05 s and 0.5 s, respectively.

Even though care was taken to put the lab in total darkness, the background of the scene was still very faintly lit (about 0.02 photon pixel⁻¹ s⁻¹), which explains the background signal. In total, 86,000 images with an exposure time of 0.05 s and 8600 images with an exposure time of 0.5 s were acquired. The same number of dark frames having the same exposure time were also acquired to remove the CIC + dark component. The dark frames were used to calculate the effective EM gain, by histogram fitting (see § 3.2). The mean bias was extracted from the dark frames and used to subtract the bias of all the light frames.

As for any CCCP data acquisition, the raw data coming out of CCCP during this experiment were stored in FITS files during the acquisition and these files were then processed off-line. The resulting SNR curves are presented in Figure 12. The frames where a cosmic ray was detected were simply removed. This accounted for about 0.15% of the frames in PC and for about 1.5% in AM.

3.5.1. PC Processing

The PC frames were processed with a threshold of 5σ. However, the sum of the PC frames, minus the dark frames, gives only the amount of counted photons, cp, whereas one wants to know the sum of incident photons, f. There are two possible sources of losses:

1. The threshold, which is responsible for the loss of the events not amplified enough and ending up in the readout noise;

2. The events that are lost due to coincidence.

The proportion of the events lost due to the threshold, el, is given by equation (2) if one uses cp as λ. The values of G and cut were, in this case, 30σ and 5σ, respectively. Thus, the correction can be made simply by dividing cp by 1 - el. This gives the amount of detected photons, dp.

The coincidence losses can be modeled by simple Poissonian statistics. Even though the amount of incident photons, f, is unknown, the probability of having zero photons is known: it is simply 1 - dp. Thus, 1 - dp represents the probability of having zero photons under a flux of f, and f can be recovered by means of

$$
f = -\ln\left(1 - \mathrm{dp}\right)
$$

This correction, however, induces an excess noise that scales as

![]()

At low flux, Fc approaches the value of 1. It is only under moderate fluxes, where coincidence losses become important, that Fc prevails.
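The two corrections described above (threshold loss first, then coincidence loss) can be chained as in the sketch below. Two assumptions are made and should be read as such: cp is expressed as a mean number of counted events per pixel per frame, and the threshold loss is approximated by its low-flux, single-electron limit 1 − exp(−cut/G) rather than by the full equation (2). The function names are hypothetical.

```python
import math

def threshold_loss_single_electron(gain_over_sigma, cut_over_sigma):
    """Approximate fraction of events lost below the threshold (n = 1 only)."""
    return 1.0 - math.exp(-cut_over_sigma / gain_over_sigma)

def incident_flux(counted, gain_over_sigma=30.0, cut_over_sigma=5.0):
    """Recover the incident flux f (photons per pixel per frame) from cp."""
    e_l = threshold_loss_single_electron(gain_over_sigma, cut_over_sigma)
    detected = counted / (1.0 - e_l)          # dp = cp / (1 - el)
    if not 0.0 <= detected < 1.0:
        raise ValueError("detected fraction must lie in [0, 1)")
    return -math.log(1.0 - detected)          # f = -ln(1 - dp)

# Round trip: start from a known flux, apply both losses, then correct.
true_flux = 0.2
dp = 1.0 - math.exp(-true_flux)
cp = dp * (1.0 - threshold_loss_single_electron(30.0, 5.0))
recovered = incident_flux(cp)
```

With G = 30σ and a 5σ cut, the single-electron approximation puts the threshold loss at roughly 15%, so the first correction is far from negligible even at low flux.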

Now, one can calculate the effective SNR of the image for every pixel. The SNR is given by

where N is the variance of the pixel over all the 86,000 frames. This SNR curve can then be compared to the curve of a perfect PC system whose noise would be only the shot noise, which is SNR = √f . This gives the experimental points shown in Figure 12, left panel. The theoretical curve is given by

![]()

where S is the incident flux, and D is the mean CIC + dark rate (the mean of all the dark frames). The term g is the excess noise induced by the coincidence loss and its correction that are implied by the PC processing

![]()

In this case, D is 0.0023 event pixel⁻¹ per image. Of course, all of these corrections do not take into account the photons lost due to the QE. However, there is nothing CCCP can do about it and the plots are made according to a perfect photon counting system that would have the same QE as the CCD97.

The agreement between the theoretical and the experimental curves for the PC processing is nearly perfect (Fig. 12, top left panel). The image resulting from the averaging of all 86,000 frames is shown in the bottom left panel of the figure.

3.5.2. AM Processing

The case of the AM processing is simpler. The flux in a pixel is given by dividing its value by the EM gain at which the image was acquired. The mean flux of a pixel, f, is simply given by the mean value of the same pixel across all the images minus the dark signal measured for that pixel. The SNR of a pixel is simply given by

![]()

where N is the variance of that pixel through all of the AM frames. When this SNR curve is normalized by that of a perfect photon-counting system, this gives the experimental curve shown in Figure 12, right panel.

The theoretical curve of that figure is obtained by taking into account the ENF, F, that plagues the EMCCD. Thus, the SNR equation is given by

![]()

where σeff is the effective readout noise. Since the EM gain is high (30σ), F² takes the value of 2. The CIC + dark, d, is 0.0025 in that plot.

In order to reach very faint fluxes with the AM processing, the bias needs to have an extremely high SNR. There must be as many frames used to build the bias as there are light frames to process. If not, since the same bias is used for all the light frames, the noise of the bias will become the dominant source of noise in the final images. The PC processing is not as sensitive as the AM processing to the SNR of the bias. In PC, the bias’s noise will be removed by the threshold applied to the data.

The plots also show that the EM gain is accurately determined, which is critical for AM processing. The experimental AM plot would shift up or down if the EM gain were underestimated or overestimated, respectively.

3.5.3. Comments

A result of this experiment that might have struck an attentive reader is the higher than expected CIC + dark rate in PC. On measurements made with histogram fittings (as in Fig. 6), the CIC + dark rate was about 0.0018 for a G/σ ratio of ∼30. The fitting of the SNR curves needed a CIC + dark level of 0.0023 in order to agree with the experimental data. This is also the mean event rate measured in the dark frames of the PC acquisition. This behavior is explained by CIC and dark electrons generated in the EM register. This is in agreement with the fact that the main source of CIC in CCCP/CCD97 is the CIC generated in the horizontal register (Daigle et al. 2008). Given that the EM register’s sole purpose is to generate electrons, it is understandable that it generates CIC too. Dark electrons can also be generated in the EM register. The mean amplification of these CIC and dark electrons varies according to the element in which the charge is created. The exact amount of CIC that is generated in the EM register is hard to compare at different G/σ ratios. At low G/σ, almost all of these CIC events will end up in the readout noise, making it impossible to count them. So, it has been decided not to count them when comparing CIC levels. However, they must be accounted for in the SNR plots.

The effect of these lightly multiplied events will be stronger in the PC data than in the AM data. The PC-processed data see an event as an event, regardless of its multiplication factor. The AM-processed data, however, will see, on average, these events as having less than one input electron. Thus, the CIC + dark level computed in PC will differ from the one computed in AM. As an example, the CIC + dark level of the PC dark frames (0.05 s exposure time) of Figure 12 is 0.0023. If these dark frames are processed in AM, the mean signal level measured is 0.0015 event pixel⁻¹ per image, which is closer to the values presented in Figure 6. Given these facts, the threshold used for the PC processing could be raised (6σ, 7σ) to avoid counting some of these events. Of course, the proportion of counted photons would diminish. An optimum threshold, yielding the best SNR, could be calculated.

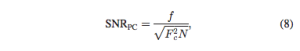

The image of the low light scene (Fig. 12, bottom panel) shows trailing electrons behind the most luminous pixels. The trail is about 2 orders of magnitude fainter than the most brightly lit pixel on its line. This could be caused either by the low horizontal CTE in the EM register or by the heating of the multiplication register when many electrons are generated in it. It is unlikely that this trailing happens in the conventional horizontal register as there is very rarely more than one electron per element in the PC image (the highest mean flux being about 0.5 photon pixel⁻¹ per image). Surprisingly, this effect is less visible in the AM image, where more electrons per pixel are gathered, given the tenfold increase in exposure time. This relation holds for even longer exposure times, as shown in Figure 13, where exposure times of 5 and 15 s frame⁻¹ were used, at the same EM gain (AM processing). These frames also show that these trailing electrons are not the result of an overflow of the horizontal register since it would worsen as the exposure time increases and more electrons are gathered. This phenomenon, however, did not compromise the quality of the scientific data, as discussed in § 4.

CCCP/CCD97 was tested at the focal plane of the FaNTOmM integral field spectrograph (Gach et al. 2002; Hernandez et al. 2003). FaNTOmM is basically a focal reducer and a narrow-band interference filter (∼10 Å) coupled to a high resolution (R > 10,000) Fabry-Perot (FP) interferometer. FaNTOmM falls into the same instrument category as the newer GHαFAS (Carignan et al. 2008; Hernandez et al. 2008). The FaNTOmM instrument is mostly used to map the kinematics of galaxies using the Doppler shift of the Hα line (such as in Hernandez et al. 2005; Chemin et al. 2006; Daigle et al. 2006b; Dicaire et al. 2008; Epinat et al. 2008). The Hα emission comes from both luminous HII regions and faint, diffuse Hα regions. There are a few strong sky emission lines caused by the OH radicals around Hα but there are many dark regions in the spectrum as well. Given the high spectral resolution of these observations, they are mostly read-noise limited instead of sky-background limited. Moreover, since an FP interferometer is a scanning instrument, it is of great interest to scan the interferometer many times throughout an observation to average the photometric variations of the sky. This requires many short exposures (typically 5–15 s between the moves of the interferometer). These kinds of observations are perfectly suited for a photon-counting camera and this is the reason why FaNTOmM is usually fitted with an IPCS as the imaging device.

The main drawback of the IPCS is its low QE, which is ∼20% in the case of the FaNTOmM IPCS. However, the IPCS has a very low dark noise, typically of the order of 10⁻⁵ electron pixel⁻¹ s⁻¹. The advantage of the EMCCD is obvious in terms of gain in QE if the CIC of the EMCCD is low enough (Daigle et al. 2004). The results obtained in the lab with CCCP/CCD97 were promising. Engineering telescope time was obtained in 2008 September on the 1.6 m telescope of the Observatoire du mont Mégantic to test CCCP on real-world objects. During this run, the CCCP/CCD97 camera was used at the focal plane of the FaNTOmM instrument, in place of the IPCS.

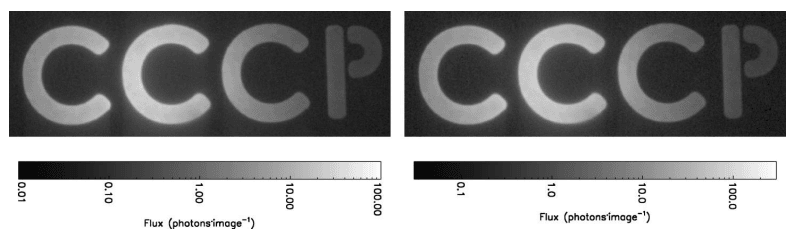

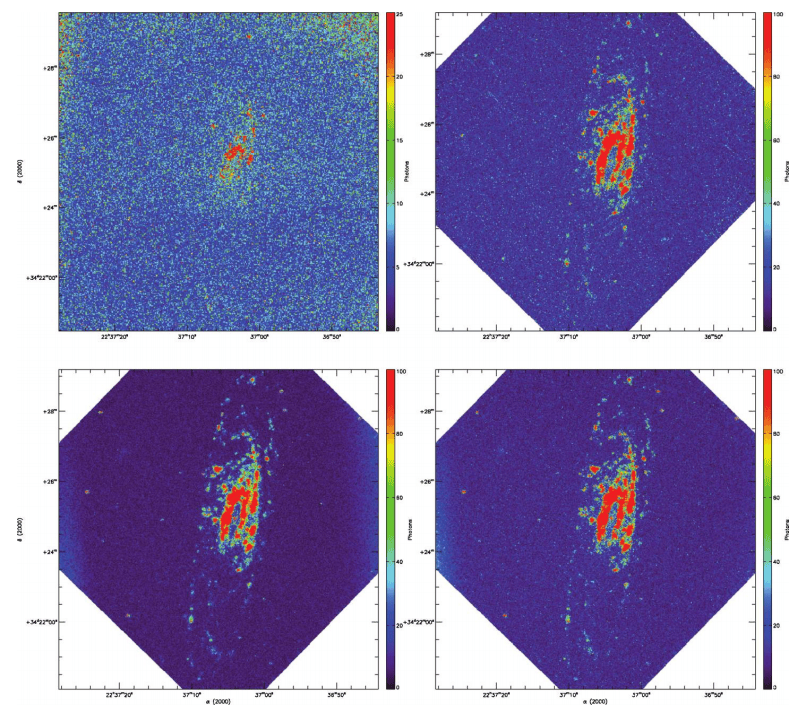

4.1. Observations

The galaxy NGC 7331 was observed during this run. This galaxy had already been observed with FaNTOmM/IPCS through the SINGS Hα survey (Daigle et al. 2006b). The earlier observation allows the sensitivity of CCCP/CCD97 to be compared with that of FaNTOmM/IPCS.

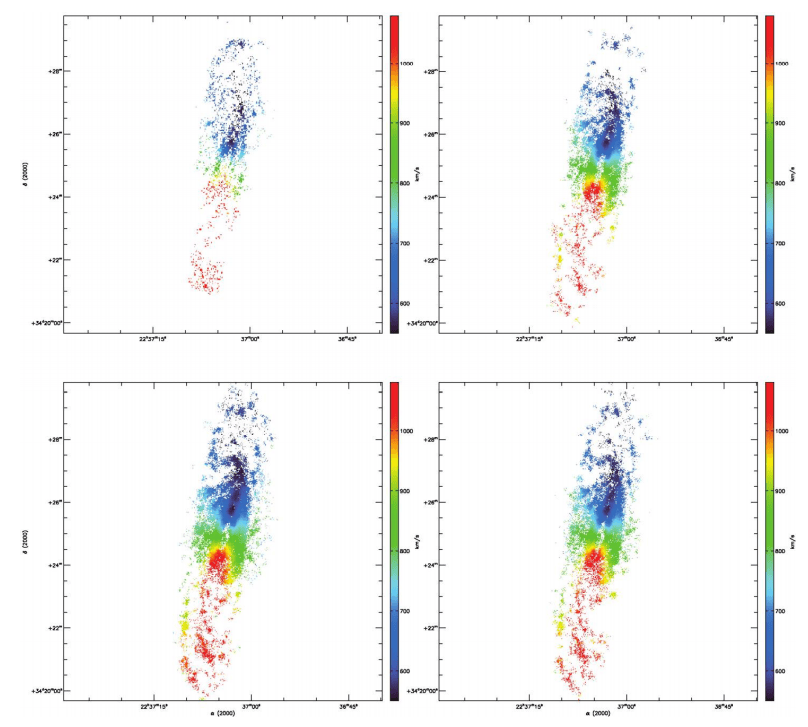

The parameters of the observations are presented in Table 1. All the data cubes were processed with the data reduction techniques presented in Daigle et al. (2006c). The sky emission was subtracted by a sky-cube fitting. No spatial smoothing was applied. The radial velocity (RV) maps were extracted and then cleaned by an automatic routine that correlates a pixel to the continuum and monochromatic flux-weighted value of the neighboring ones to determine its validity, based on a maximum deviation. Then, a manual cleanup removes the pixels whose RV has been incorrectly determined. This is usually due to some sky residuals that are polluting the pixel.

In Table 1, the plot of the mean flux per cycle is shown. This, coupled to the moon and sky conditions, gives a rough idea of the quality of the data. The moon was absent when the observation with the IPCS was made and the sky was mostly clear. Thus, the slight drop in the flux toward the end of the observation can be explained by absorption. On the other hand, the moon was mostly full (hence the engineering time) during the CCCP/CCD97 observations and there were a few cirrus clouds. The cirrus were lit by the moon, and this caused the flux to rise toward the end of the AM observation and at the beginning of the PC observation. Moreover, the moon’s light excites the OH radicals in the upper atmosphere and makes them glow brighter. This causes the sky-emission lines around Hα to be stronger, which, once subtracted, leaves a noisier background due to shot noise. The same thing happens once the brighter foreground caused by the clouds lit by the moon is subtracted. It thus takes more photons from the galaxy to reach a given SNR in the CCCP/CCD97 observations. On the other hand, the interference filter’s transmission also changes from the IPCS to the CCCP/CCD97 observation because of the exterior temperature. Given its systemic velocity of 816 km s⁻¹, NGC 7331’s Hα emission is redshifted to about 6580 Å. Its Hα emission was better centered in the filter during the CCCP/CCD97 observation. In the IPCS observation, the filter offset should benefit the approaching side of the galaxy while weakening the receding side.
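The wavelength of about 6580 Å quoted above can be checked with the nonrelativistic Doppler relation. The short Python sketch below assumes a rest Hα wavelength of 6562.8 Å in air, a standard value that is not stated in the text; the constant and function names are illustrative only.

```python
# Minimal sketch: observed wavelength of a line for a given recession velocity.
# HALPHA_REST_A is an assumed standard value (air), not taken from the article.
C_KM_S = 299_792.458      # speed of light in km/s
HALPHA_REST_A = 6562.8    # rest wavelength of H-alpha in angstroms (assumption)


def observed_wavelength(rest_a, velocity_km_s):
    """Nonrelativistic Doppler shift, valid for velocity << c."""
    return rest_a * (1.0 + velocity_km_s / C_KM_S)


if __name__ == "__main__":
    systemic = 816.0  # systemic velocity of NGC 7331 in km/s (from the text)
    shifted = observed_wavelength(HALPHA_REST_A, systemic)
    print(f"H-alpha at {systemic:.0f} km/s: {shifted:.1f} angstroms")
```

With the systemic velocity given in the text, the line lands close to 6580.7 Å. The approaching side of the galaxy appears at slightly shorter wavelengths and the receding side at slightly longer ones.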

4.2. IPCS and CCCP/CCD97 Comparisons

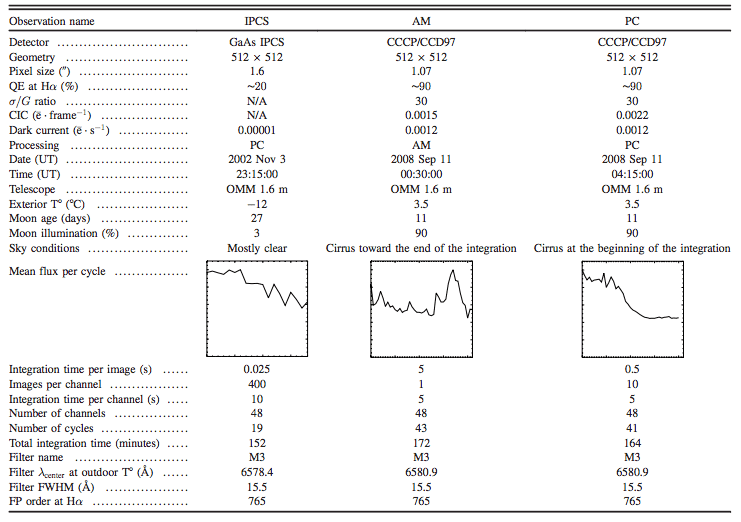

On the whole, it is a delicate matter to compare observations made under different environmental and photometric conditions. Nevertheless, the observations should give a first-order estimate of how the CCCP/CCD97 compares to the IPCS. The monochromatic intensity (MI) maps and the RV maps of all the observations are provided in Figures 14 and 15. One striking observation is that, even though the integration time is comparable between the IPCS and the CCCP/CCD97 observations, the galaxy’s approaching side is barely visible on the MI map of the IPCS. This is reflected in the RV map, which shows only a few sparse pixels from this side of the galaxy. By comparing with the CCCP/CCD97 observations, it is questionable whether these pixels are giving an accurate reading of the rotation velocity of this side of the galaxy. Even though the observing conditions were different, it is hardly conceivable that the IPCS observation could have gone deeper than the CCCP/CCD97 observation even in perfect conditions. Moreover, the colder temperature at which the IPCS data were acquired should benefit the approaching (blue) side as compared to the CCCP/CCD97 observation.

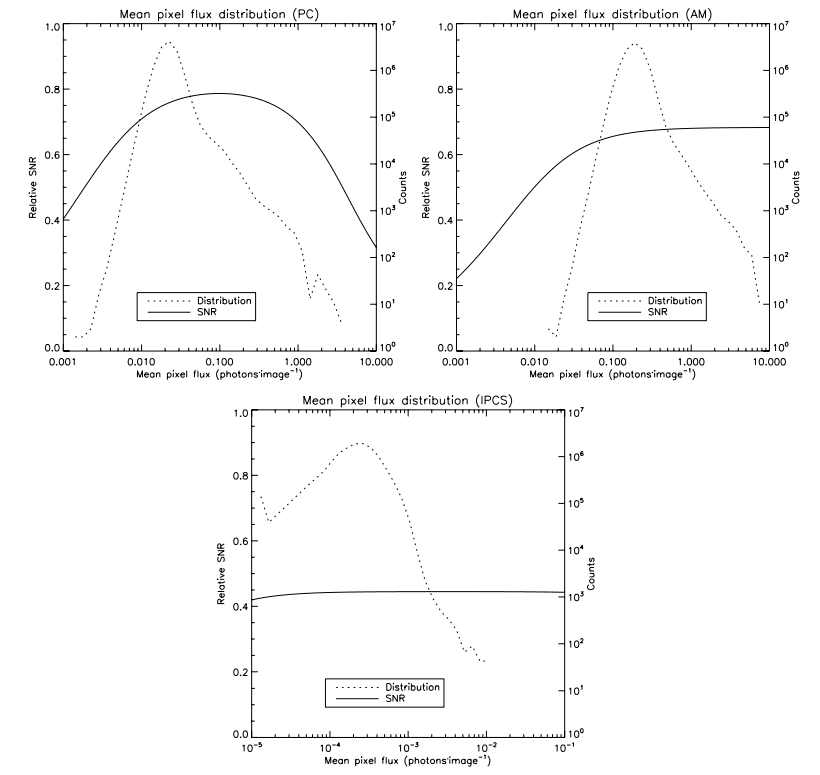

4.3. Efficiency

The efficiency at which the data were gathered is outlined in Figure 16. This figure shows the distribution of the mean pixel intensity in the data cubes superimposed on the expected SNR curve for that flux regime. It shows that all the observations were made in roughly the best conditions for the detectors and their modes of operation. The IPCS mean pixel flux is very low: the IPCS is operated at 40 frames s⁻¹ but does not suffer from CIC. Thus, there is no loss at operating that fast.

4.4. PC versus AM Processing

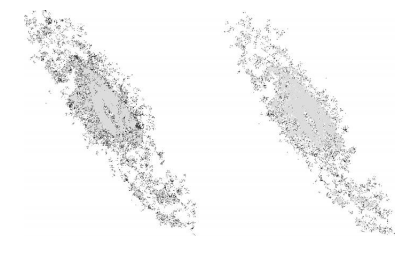

The observing conditions prevailing when the PC and AM data cubes were gathered were not stable enough to provide a convincing comparison. One can see that the background of the MI map of the PC observation is smoother (Fig. 14). The RV map of the AM observation looks “skinny” as compared to the PC one. On this point, it is better to rely on experimental data taken in the lab in order to compare both operating modes with their respective integration times (§ 3.5). One comparison that is absolute, however, is the comparison of the PC and AM processing on the data acquired at 0.5 s frame⁻¹ on CCCP/CCD97 (the PC observation). Since there are very few pixels having ∼1 photon image⁻¹ (Fig. 16), the effective SNR of the data cube processed in PC should be higher than the one processed in AM. But how does this obvious fact influence the quality of the reduced data of NGC 7331? The bottom panels of Figures 14 and 15 show, respectively, the monochromatic intensity and the RV maps of both the PC and AM processing of the PC observation. First, the monochromatic map of the PC processing shows a smoother and darker background, which means that the sky emission has been better removed. This is very important, as it will allow the radial velocity of fainter regions of the galaxy to be resolved. Next, the RV map of the PC processing shows a more extended coverage of the signal. This extended coverage is seen as more pixels near the edge of HII regions and more sparse pixels in diffuse Hα regions. Figure 17 enhances these differences by drawing in black the pixels that are exclusive to the PC or AM RV map. There are far more pixels exclusive to the PC RV map than there are exclusive to the AM RV map. Thus, as expected, the PC processing yields a better, richer RV map than the AM processing.
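The expected advantage of PC processing at these fluxes can be illustrated with an idealized model. The Python sketch below assumes Poisson photon arrivals, an excess noise factor squared of 2 in AM, a perfect threshold in PC with a coincidence-loss correction, and no read noise or dark signal. The optional `cic` term (spurious events per pixel per frame) and all function names are assumptions made for illustration; this is not the model used to produce Figure 16.

```python
import math

ENF_SQUARED = 2.0  # excess noise factor squared at high EM gain


def relative_snr_am(flux, cic=0.0):
    """SNR of analog-mode processing relative to an ideal photon counter.

    flux and cic are mean events per pixel per frame. The multiplication
    noise doubles the variance of the signal plus spurious charge.
    """
    if flux <= 0:
        raise ValueError("flux must be positive")
    return math.sqrt(flux / (ENF_SQUARED * (flux + cic)))


def relative_snr_pc(flux, cic=0.0):
    """SNR of photon-counting processing relative to an ideal photon counter.

    A pixel is counted with probability p = 1 - exp(-(flux + cic)). The
    corrected estimate -ln(1 - p) - cic has, to first order, a variance of
    expm1(flux + cic) per frame.
    """
    if flux <= 0:
        raise ValueError("flux must be positive")
    return math.sqrt(flux / math.expm1(flux + cic))


if __name__ == "__main__":
    for flux in (0.001, 0.1, 0.5, 1.0, 2.0):
        print(f"{flux:6.3f}  AM={relative_snr_am(flux):.3f}  "
              f"PC={relative_snr_pc(flux):.3f}")
```

Under these assumptions, PC processing stays ahead of AM processing up to roughly 1.26 photon pixel⁻¹ image⁻¹, beyond which coincidence losses outweigh the benefit of removing the excess noise factor.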

A new camera built using CCCP, a CCD Controller for Counting Photons, and a scientific grade EMCCD CCD97 from e2v technologies was presented. This camera combines the high QE of the CCD with the subelectron readout noise of the EMCCD, allowing extreme faint flux imaging at an efficiency that is comparable to a perfect photon-counting device. Laboratory experiments showed that the camera behaved exactly as predicted by theory, in terms of relative SNR as a function of the flux, down to fluxes as low as 0.001 photon pixel⁻¹ image⁻¹.

It has been shown that the CCCP/CCD97 camera yields very low CIC + dark levels at high EM gain, which represents a significant advance in the sensitivity that an EMCCD camera equipped with a CCD97 can achieve. Moreover, it has been demonstrated that the CTE figure of the EMCCD can be better handled with CCCP. It is beyond the scope of this article to describe in detail the clocking that is applied to the CCD97 to yield the lower CIC and higher CTE. Interested readers are invited to read Daigle et al. (in preparation).

Both PC and AM processing were compared, and it has been demonstrated that the PC processing effectively achieved a better SNR for the same data set. Evidence that the PC processing yields a better SNR than the AM processing, even at 10 times the frame rate, for the same total integration time, was presented. Given that the theory behind the SNR of an EMCCD seems well understood, one could argue that the PC processing should yield a better SNR at low and moderate fluxes, even at a higher frame rate, as compared to AM processing.

The performance of CCCP/CCD97 was compared to that of a GaAs photocathode-based IPCS on an extragalactic target in a shot noise-dominated regime. The achieved SNR of the CCCP/CCD97 observations is superior to the one achieved with the IPCS. Though it is delicate to compare two independent observations taken under different photometric conditions, the results presented could hardly be due solely to the photometric conditions. The CCCP/CCD97 camera must be, at some level, more sensitive than the IPCS, for the flux regime at which the data were acquired.

A new camera that will use the second version of the CCCP controller is under construction at the LAE in Montréal. It will use a larger 1600 × 1600, non–frame-transfer EMCCD from e2v Technologies. This camera will be used as the high-resolution camera at the focal plane of the 3D-NTT instrument (Marcelin et al. 2008) that is expected to see first light at the end of 2009.

O. Daigle is grateful to the NSERC for funding this study through his Ph.D. thesis. We would like to thank the staff at the Observatoire du mont Mégantic for their helpful support, and the anonymous referee for valuable comments.

Notes

6 For CCCP/CCD97, two frame transfers were done before reading out, yielding a 0 s integration time. However, dark signal is generated during the readout.

7 Interested readers are invited to go to http://www.astro.umontreal.ca/ odaigle/ emccd to read more about it and see the IDL code.

8 In the datasheet available at https://www.andor.com/download/download_file/?file=L897SS.pdf.

9 The measurements of the bad events involved about a thousand dark frames, in addition to the zoomed region of Figure 8.

Basden, A. G., Haniff, C. A., & Mackay, C. D. 2003, MNRAS, 345, 985

Carignan, C., Hernandez, O., Beckman, J. E., & Fathi, K. 2008, Pathways Through an Eclectic Universe, ASP Conf. Ser. 390, ed. J. Knapen, T. Mahoney, A. Vazdekis, 168

Chemin, L., et al. 2006, MNRAS, 366, 812

Daigle, O., Gach, J.-L., Guillaume, C., Carignan, C., Balard, P., & Boissin, O. 2004, Proc. SPIE, 5499, 219

Daigle, O., Carignan, C., & Blais-Ouellette, S. 2006a, Proc. SPIE, 6276, 62761F

Daigle, O., Carignan, C., Amram, P., Hernandez, O., Chemin, L., Balkowski, C., & Kennicutt, R. 2006b, MNRAS, 367, 469

Daigle, O., Carignan, C., Hernandez, O., Chemin, L., & Amram, P. 2006c, MNRAS, 368, 1016

Daigle, O., Gach, J.-L., Guillaume, C., Lessard, S., Carignan, C., & Blais-Ouellette, S. 2008, Proc. SPIE, 7014, 70146

Dicaire, I., et al. 2008, MNRAS, 385, 553

e2v Technologies 2004, Technical Note 4

Epinat, B., et al. 2008, MNRAS, 388, 500

Gach, J.-L., et al. 2002, PASP, 114, 1043

Gach, J.-L., Guillaume, C., Boissin, O., & Cavadore, C. 2004, Scientific Detectors for Astronomy, 611

Hernandez, O., Gach, J.-L., Carignan, C., & Boulesteix, J. 2003, Proc. SPIE, 4841, 1472

Hernandez, O., Carignan, C., Amram, P., Chemin, L., & Daigle, O. 2005, MNRAS, 360, 1201

Hernandez, O., et al. 2008, PASP, 120, 665

Janesick, J. R. 2001, Scientific Charge-coupled Devices (monogr. PM83; Bellingham, WA: SPIE Optical Engineering Press) 906

Lantz, E., Blanchet, J.-L., Furfaro, L., & Devaux, F. 2008, MNRAS, 386, 2262

Mackay, C., Basden, A., & Bridgeland, M. 2004, Proc. SPIE, 5499, 203

Marcelin, M., et al. 2008, Proc. SPIE, 7014, 701455

Robbins, M. S., & Hadwen, B. J. 2002, IEEE Trans. Electron Devices, 50, 1488R

Tulloch, S., et al. 2006, Scientific Detectors for Astronomy, 2005, 303

Tulloch, S. 2008, High Time Resolution Astrophysics: The Universe at Sub-Second Timescales, 984, 148

Wen, Y., et al. 2006, Proc. SPIE, 6276, 62761H